Wrist Point Detection (Building from scratch)

Aim:

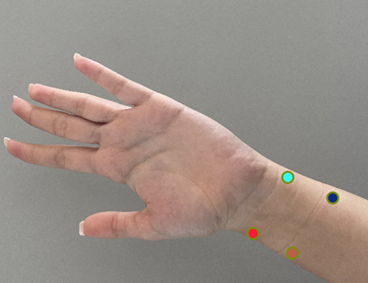

Write a deep learning algorithm/architecture to detect the (x, y) coordinates of the wrist points, as shown in the snapshot below, for real-time applications.

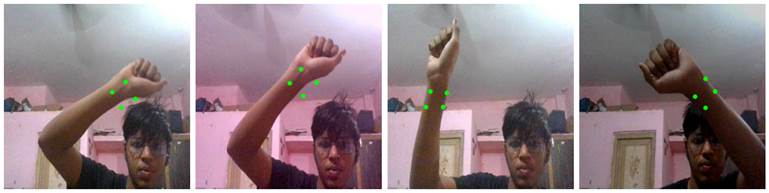

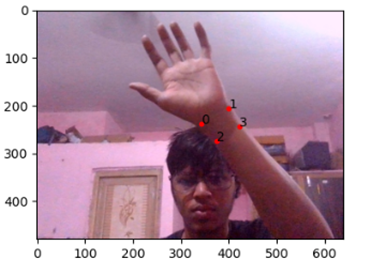

Final Output:

This could have been done with MediaPipe or similar tools, but I wanted to build it from scratch.

Dataset:

80 images were captured and manually annotated, and a data augmentation pipeline then generated 45 augmented images from each of them.

The dataset is split into three folders, with 3600 images in each folder:

· Test

· Train

· Val

Each folder contains Images and Labels subfolders.

The camera captured images at 640 x 480, which were later resized to 250 x 250.

The labels were also rescaled to match the 250 x 250 images and then normalized.
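A minimal sketch of the label transformation, assuming each label is four (x, y) pixel pairs on the 640 x 480 frame and that "normalized" means dividing by the image size so values fall in [0, 1]. The function names are hypothetical, not taken from the project's notebooks.

```python
import numpy as np

SRC_W, SRC_H = 640, 480  # camera resolution (from the text)
DST = 250                # training resolution (from the text)


def labels_to_normalized(points_px, src_w=SRC_W, src_h=SRC_H):
    """Map pixel (x, y) pairs on the source frame to flat normalized values.

    Resizing 640x480 -> 250x250 scales x by 250/640 and y by 250/480.
    Dividing the resized coordinates by 250 then gives values in [0, 1].
    Returns a flat array ordered x1, y1, x2, y2, ... (assumed label order).
    """
    pts = np.asarray(points_px, dtype=np.float32).reshape(-1, 2)
    resized = pts * np.array([DST / src_w, DST / src_h], dtype=np.float32)
    return (resized / DST).reshape(-1)


def normalized_to_pixels(flat, width, height):
    """Invert the normalization for any target frame size (e.g. 640x480)."""
    pts = np.asarray(flat, dtype=np.float32).reshape(-1, 2)
    return pts * np.array([width, height], dtype=np.float32)
```

Because normalized coordinates do not depend on resolution, `normalized_to_pixels(pred, 640, 480)` can map a model prediction straight back onto the original camera frame.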

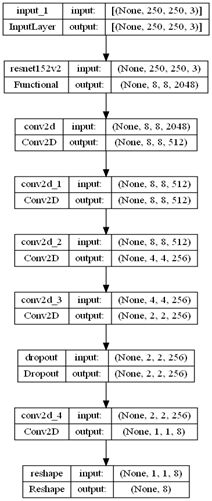

Architecture:

The input shape was 250 x 250.

Transfer learning was applied over ResNet152V2, with its weights fine-tuned for this task.

The output is a one-dimensional vector of 8 values representing the x1, y1, x2, y2, x3, y3, x4, y4 coordinates of the 4 points mentioned above.
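A minimal Keras sketch of this kind of setup, assuming a 3-channel RGB input and labels normalized to [0, 1]. The pooling layer, the 256-unit dense head, the sigmoid output, MSE loss, optimizer, and learning rate are my assumptions for illustration, not details confirmed by the project; `build_wrist_model` is a hypothetical name.

```python
import tensorflow as tf


def build_wrist_model(input_size=250, weights="imagenet", train_backbone=True):
    """Hypothetical sketch: ResNet152V2 backbone + 8-value regression head."""
    inputs = tf.keras.Input(shape=(input_size, input_size, 3))
    # ResNetV2 expects pixels in [-1, 1]; this mirrors resnet_v2.preprocess_input
    x = tf.keras.layers.Rescaling(scale=1.0 / 127.5, offset=-1.0)(inputs)
    backbone = tf.keras.applications.ResNet152V2(
        include_top=False,
        weights=weights,
        input_shape=(input_size, input_size, 3),
    )
    # True = fine-tune all pretrained weights; False = freeze the backbone
    backbone.trainable = train_backbone
    x = backbone(x)
    x = tf.keras.layers.GlobalAveragePooling2D()(x)
    x = tf.keras.layers.Dense(256, activation="relu")(x)
    # 8 outputs: x1, y1, ..., x4, y4, squashed to [0, 1] (assumed label range)
    outputs = tf.keras.layers.Dense(8, activation="sigmoid")(x)
    model = tf.keras.Model(inputs, outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-4),
        loss="mse",
        metrics=["mae"],
    )
    return model
```

Passing `weights=None` builds the same graph without downloading ImageNet weights, which is handy for quick shape checks.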

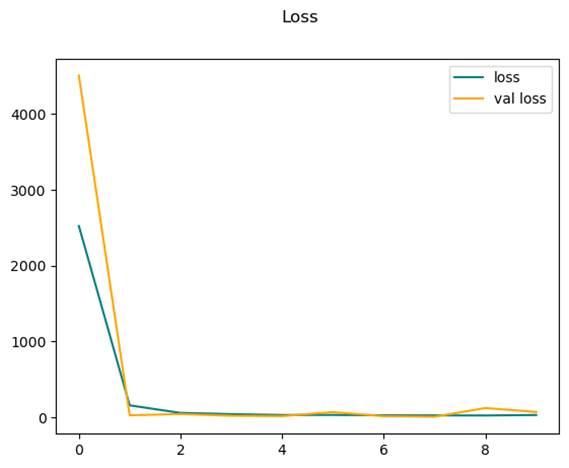

Learning Graph:

The model converged after the 2nd epoch. Since EarlyStopping was not implemented, training ran for all 10 epochs, and the loss stayed consistent after convergence.
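Adding early stopping is a small change in Keras. A minimal sketch, assuming validation loss is the metric to watch; the patience value and the `make_callbacks` helper name are illustrative choices.

```python
import tensorflow as tf


def make_callbacks(patience=2):
    """Stop when val_loss stops improving and keep the best weights seen."""
    early_stop = tf.keras.callbacks.EarlyStopping(
        monitor="val_loss",
        patience=patience,           # epochs to wait without improvement
        restore_best_weights=True,   # roll back to the best epoch at the end
    )
    return [early_stop]
```

Usage (with hypothetical dataset names): `model.fit(train_ds, validation_data=val_ds, epochs=10, callbacks=make_callbacks())`. With convergence around epoch 2, training would then stop a couple of epochs later instead of running all 10.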

Challenges:

The real challenge was creating and annotating the dataset, and deciding what kind of annotation to use. After some research I settled on keyframe annotation, saved the keyframes to CSV, and later converted them to JSON.

Normalization was a bit tricky, and augmentation, along with verifying the keyframes after augmentation, added more complexity.

Training took a long time even on a GTX 1650, and after several crashes I was finally able to train the model, verify its accuracy, and test it on real data.
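A minimal sketch of the CSV to JSON step using only the standard library. The CSV layout is an assumption: one row per image with a hypothetical `image` column followed by `x1, y1, ..., x4, y4`. The actual export format of the annotation tool may differ.

```python
import csv
import json

# Hypothetical column names; adjust to whatever the annotation export uses.
COORD_COLS = ["x1", "y1", "x2", "y2", "x3", "y3", "x4", "y4"]


def csv_to_json(csv_path, json_path):
    """Convert per-image keypoint rows into {image_name: [8 floats]} JSON."""
    records = {}
    with open(csv_path, newline="") as f:
        for row in csv.DictReader(f):
            # One row per annotated image: file name plus 8 coordinate values
            records[row["image"]] = [float(row[c]) for c in COORD_COLS]
    with open(json_path, "w") as f:
        json.dump(records, f, indent=2)
    return records
```

Keying the JSON by image file name makes it easy to look up the label for each file in the Images subfolder.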
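The difficulty with augmenting keypoint data is that every geometric change to the image must be applied to the labels too, and the result should be checked. A minimal NumPy sketch with two illustrative transforms (a horizontal flip and a zero-filled shift) and a validity check, assuming normalized (x, y) labels; the project's actual augmentations are not described, and the function names are hypothetical. If the four points carry left/right meaning, a flip may also require reordering them.

```python
import numpy as np


def hflip(image, points):
    """Mirror the image left-right; points are normalized (x, y) rows."""
    flipped = image[:, ::-1].copy()
    pts = np.asarray(points, dtype=np.float64).copy()
    pts[:, 0] = 1.0 - pts[:, 0]  # x mirrors around the vertical centre line
    return flipped, pts


def shift(image, points, dx, dy):
    """Translate the image by (dx, dy) pixels with zero fill; move points too."""
    h, w = image.shape[:2]
    out = np.zeros_like(image)
    # Source/destination slices for a translation that drops pixels leaving the frame
    ys, yd = (slice(0, h - dy), slice(dy, h)) if dy >= 0 else (slice(-dy, h), slice(0, h + dy))
    xs, xd = (slice(0, w - dx), slice(dx, w)) if dx >= 0 else (slice(-dx, w), slice(0, w + dx))
    out[yd, xd] = image[ys, xs]
    pts = np.asarray(points, dtype=np.float64).copy()
    pts[:, 0] += dx / w
    pts[:, 1] += dy / h
    return out, pts


def points_valid(points):
    """Verification step: reject samples whose keypoints left the image."""
    return bool(np.all((points >= 0.0) & (points <= 1.0)))
```

A sample whose keypoints fail `points_valid` after augmentation can simply be discarded and regenerated.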

File Structure:

app.py – runs the model on video.

wrist_estimator.ipynb – complete walkthrough of the model design.

data_convert.ipynb – converts the data and creates the dataset.

- Amit Yadav

- +91 7518844490

- https://github.com/warriorwizard